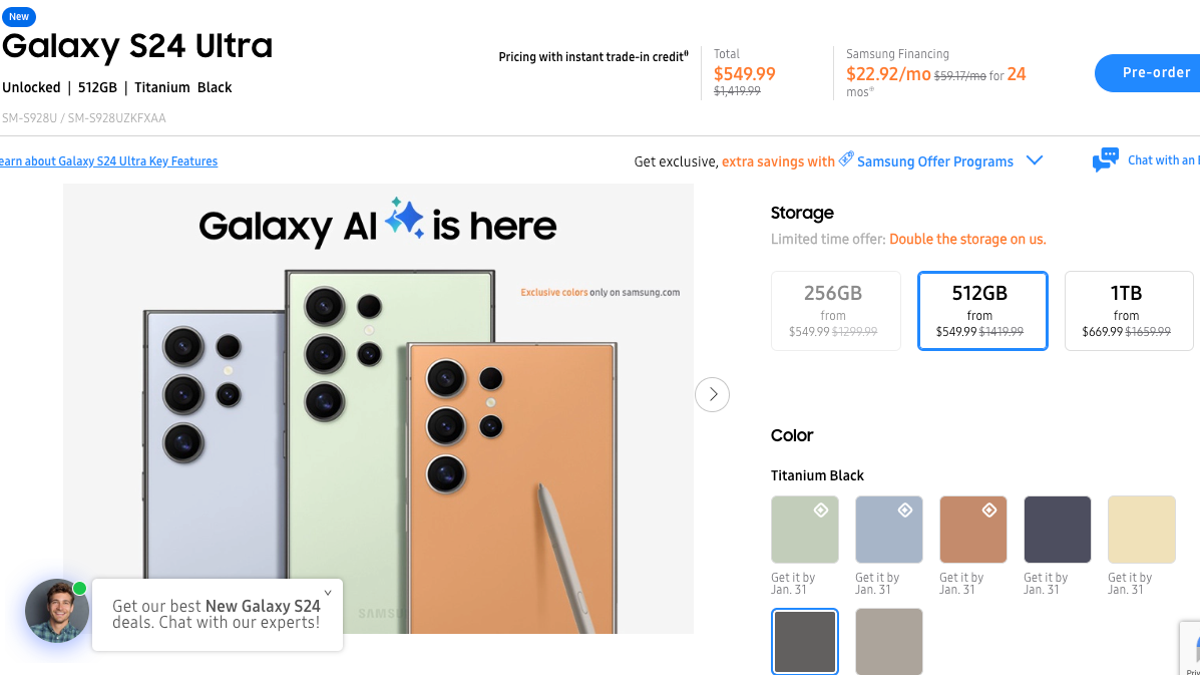

Ed Newton-Rex, a former TikTok head AI designer and executive at Stability AI, raised concerns about the ethics surrounding generative AI. Following his departure from Stability AI due to disagreements over data collection practices, Newton-Rex initiated discussions on ethical AI development. He observed a growing interest in utilizing generative AI models that prioritize fair treatment of creators and provide enhanced decision-making tools.

In response to these concerns, Newton-Rex established a nonprofit organization called Fairly Trained. This organization introduces a certification program, known as L Certification, aimed at recognizing AI companies that ethically license their training data. The certification process requires companies to demonstrate that their training data is either explicitly licensed, in the public domain, obtained through suitable open licenses, or internally owned.

Fairly Trained has granted certification to nine companies, including Bria AI and LifeScore Music, which license their data from sources such as Getty Images and major record labels, respectively. Certification carries a fee ranging from $500 to $6,000, depending on the company’s size.

Contrary to OpenAI’s assertion that using unlicensed data is unavoidable in developing generative AI services, Newton-Rex and the certified companies advocate for data licensing compliance. They view licensing data as a fundamental requirement, likening AI models trained on unlicensed data to Napster and emphasizing the importance of provenance and rights in the music industry.

Although Fairly Trained has garnered support from trade groups and industry players, the movement to reform the AI industry’s data acquisition practices is in its early stages. Newton-Rex, operating solo, remains committed to his startup ethos of swift execution and plans to expand the certification offerings in the future, potentially addressing compensation issues.

The concept of labeling AI products with data transparency information, as championed by Fairly Trained, resonates with industry experts like Howie Singer, who draws parallels to similar efforts such as Adobe’s Content Authenticity Initiative. While certain informed groups may value ethical data certifications, the broader public’s awareness of and interest in these practices may vary.

Neil Turkewitz, a copyright activist, acknowledges the significance of Fairly Trained’s certification but suggests that it should encompass broader considerations beyond data sourcing legality. Newton-Rex acknowledges this feedback and envisions introducing additional certificates in the future to address concerns like compensation, aiming to contribute positively to the ongoing dialogue on AI ethics.